Generating Architectural Renderings with AI

Zachary Robinson, Kayla Marindin, & Sabri Gokmen

stable diffusion, rendering, architecture

Participating in the Stable Diffusion Workshop was a transformative experience that introduced us, Zachary Robinson and Kayla Marindin, to new ways of integrating artificial intelligence into the early stages of architectural design. As students who value iterative exploration and conceptual depth, we were excited to use AI not just as a rendering tool, but as a creative partner that could expand the visual and formal language of our work. Throughout the workshop, we developed a deeper understanding of how text-to-image diffusion models can bridge abstract conceptual ideas with visual output, enabling a highly intuitive and responsive design process.

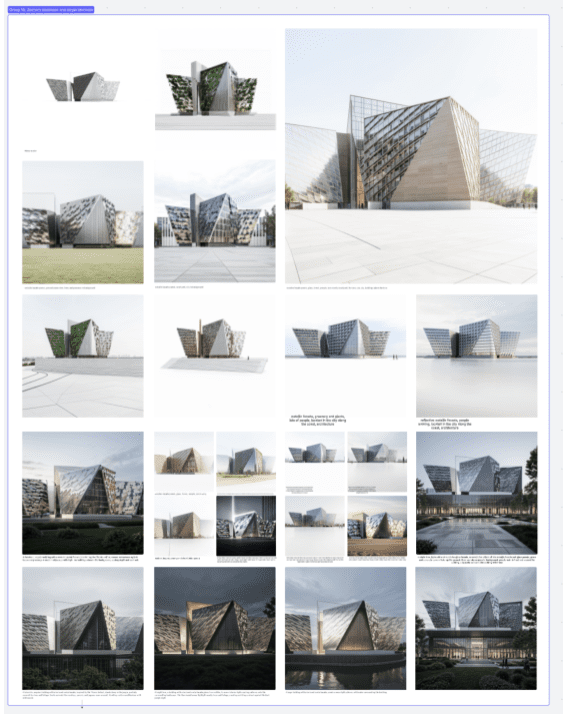

For this collaborative project, we used AI as a tool to investigate the spatial and material potential of a civic-scale building composed of folded geometries. We first used Rhino and Grasshopper to model the Titanic Belfast and chose a base view for the renders. Early prompts centered around themes such as “crystalline forms,” “reflective façades,” “folded metallic panels,” and “biophilic surfaces.” These descriptors allowed us to quickly generate a wide array of variations that explored different interpretations of light, form, and materiality. The immediacy of the AI’s feedback helped us push our design further than we initially anticipated, giving us the freedom to iterate without being constrained by time-intensive modeling workflows.
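The prompt exploration described above can be sketched as a small script that combines the theme descriptors into a batch of prompts for one fixed base view. This is a minimal illustration, not the workflow used in the workshop: the base description, the lighting conditions, and the `build_prompts` helper are all assumptions added here, and only the four theme descriptors come from the text.

```python
from itertools import product

# Theme descriptors quoted in the text above.
THEMES = [
    "crystalline forms",
    "reflective façades",
    "folded metallic panels",
    "biophilic surfaces",
]

# Hypothetical lighting conditions, added for illustration only.
LIGHTING = ["morning light", "overcast noon", "golden hour"]

# Hypothetical wording for the chosen base view of the model.
BASE = "architectural rendering of a civic-scale building with folded geometries"


def build_prompts(base, themes, lighting):
    """Return one prompt string per (theme, lighting) pair."""
    return [
        f"{base}, {theme}, {light}"
        for theme, light in product(themes, lighting)
    ]


prompts = build_prompts(BASE, THEMES, LIGHTING)
for prompt in prompts:
    print(prompt)
```

Each resulting string could then be passed to whichever text-to-image interface is in use, keeping the base view constant so that differences between images come from the descriptors rather than the camera.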

One of the most valuable aspects of using AI in this context was its ability to spark unexpected design outcomes. Rather than using it to confirm preconceived ideas, we allowed the AI to introduce new formal directions that we might not have considered on our own. For example, one generated image presented a particularly compelling juxtaposition between smooth wooden panels and reflective glass planes, which informed the material strategy of our final scheme. Another suggested a dynamic spatial rhythm of alternating solid and void that later influenced our massing strategy.

The AI-generated visuals also became a critical part of our design communication. They helped us visualize atmospheric qualities, such as light at different times of day or how the building might sit in a landscape setting, that would have been time-consuming to render manually at this early stage. These speculative images became key discussion points as we refined our formal concept, eventually resulting in a cohesive series of faceted volumes that integrate transparency, reflection, and warmth in both their form and material palette.

Overall, the Stable Diffusion Workshop taught us to embrace AI not as a shortcut, but as a tool for deepening design exploration. It empowered us to work more fluidly between language and image, idea and form. This experience has encouraged us to continue integrating AI into our process, not to replace traditional skills but to complement them and enhance the imaginative potential of early-stage design. Moving forward, we see AI as an essential part of the evolving designer’s toolkit: one that challenges, surprises, and ultimately sharpens our architectural thinking.